Department of Computer Science and Engineering, Michigan State University

I am a Ph.D. student in the Department of Computer Science and Engineering at Michigan State University. My current research is supervised by Prof. Zeng in the INSS lab. Before that, I received my master's degree from the Institute of Computing Technology, University of Chinese Academy of Sciences, under the supervision of Dr. Yao. I received my bachelor's degree from Beijing University of Technology.

My research interests focus on:

• Wireless Sensing: Multimodal sensing using signals such as mmWave, LiDAR, and visible light.

• Deep Learning: Neural networks for sensing and planning.

Education

- Michigan State University, Department of Computer Science and Engineering. Ph.D. Student, Aug. 2025 - present

- University of Chinese Academy of Sciences. M.S. in Computer Science, Sep. 2022 - Jul. 2025

- Beijing University of Technology. B.S. in Electronic Engineering, Sep. 2018 - Jul. 2022

Honors & Awards

- Third Prize in National College Student Innovation and Entrepreneurship Annual Conference, 2022

- Outstanding Graduate of Beijing University of Technology, 2022

News

Selected Publications

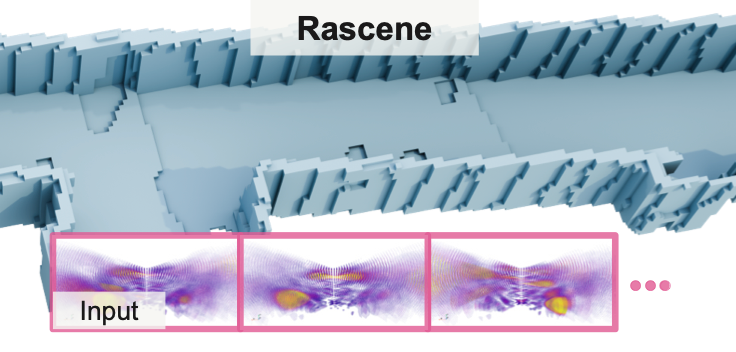

Rascene: High-Fidelity 3D Scene Imaging with mmWave Communication Signals

KunZhe Song, Geo Jie Zhou, Xiaoming Liu, Huacheng Zeng

The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026
